I just bought a Pixel 6a for testing (previously I had only tested on an iPhone 13). When I run detection on an image stream, I get results at ~3.5 FPS on the Pixel, whereas on the iPhone I get ~31 FPS.

Has anyone else had a similar experience?

Here is the code I use to run detection on the camera stream:

import 'dart:ui' as ui;

import 'package:camera/camera.dart';

import 'package:flutter/material.dart';

import 'package:ncnn_yolox_flutter/models/kanna_rotate/kanna_rotate_device_orientation_type.dart';

import 'package:ncnn_yolox_flutter/models/pixel_channel.dart';

import 'package:ncnn_yolox_flutter/models/pixel_format.dart';

import 'package:ncnn_yolox_flutter/models/yolox_results.dart';

import 'package:ncnn_yolox_flutter/ncnn_yolox.dart';

import 'package:test_android/src/app.dart';

import '../../main.dart';

import '../widgets/yolox_camera_layout_widget.dart';

class TestPage extends StatefulWidget {

const TestPage({Key? key}) : super(key: key);

@override

State<TestPage> createState() => _TestPageState();

}

class _TestPageState extends State<TestPage> {

late CameraController controller;

// Both values are non-nullable, so no `??` fallback is needed.

KannaRotateDeviceOrientationType get deviceOrientationType =>

controller.value.deviceOrientation.kannaRotateType;

int get sensorOrientation => controller.description.sensorOrientation;

double? _screenWidth;

bool processing = false; // for detection camera stream

bool cameraOpen = false; // for camera or body

final ncnn = NcnnYolox();

ui.Image? previewImage; // used in detection camera stream

List<YoloxResults> yoloxResults = [];

@override

void initState() {

super.initState();

initCoco();

controller = CameraController(cameras[0], ResolutionPreset.medium);

controller.initialize().then((_) {

if (!mounted) {

return;

}

setState(() {});

}).catchError((Object e) {

if (e is CameraException) {

switch (e.code) {

case 'CameraAccessDenied':

// Handle access errors here.

break;

default:

// Handle other errors here.

break;

}

}

});

}

@override

void dispose() {

controller.dispose();

super.dispose();

}

@override

void didChangeAppLifecycleState(AppLifecycleState state) {

switch (state) {

case AppLifecycleState.resumed:

case AppLifecycleState.inactive:

print('Lifecycle Inactive!!!!!!!');

//controller.dispose();

_stopImageStream();

setState(() {

processing = false;

cameraOpen = false;

});

break;

case AppLifecycleState.paused:

case AppLifecycleState.detached:

break;

}

}

initCoco() async {

await ncnn.initYolox(

modelPath: 'assets/yolox_coco.bin',

paramPath: 'assets/yolox_coco.param',

autoDispose: true,

nmsThresh: 0.35,

confThresh: 0.3,

targetSize: 416,

);

}

onDetected(

List<YoloxResults> results,

ui.Image image,

) {

setState(() {

yoloxResults = results;

previewImage = image;

});

}

Future<void> _startImageStream() async {

print('Start Stream!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!');

await controller.startImageStream(

(image) {

if (processing) {

return;

}

switch (image.format.group) {

case ImageFormatGroup.unknown:

case ImageFormatGroup.jpeg:

print('not support format');

return;

case ImageFormatGroup.yuv420:

_yuv420(image);

break;

case ImageFormatGroup.bgra8888:

_bgra(image);

break;

}

},

);

}

Future<void> _stopImageStream() async {

print('Stop Stream?????????????????????????????????????????');

// Wait for the stream to stop before updating the UI state.

await controller.stopImageStream();

if (!mounted) return;

setState(() {

processing = false;

cameraOpen = false;

});

}

void _yuv420(CameraImage image) {

processing = true;

final stopwatchYuv420sp2Uint8List = Stopwatch()..start();

final yuv420sp = ncnn.yuv420sp2Uint8List(

y: image.planes[0].bytes,

u: image.planes[1].bytes,

v: image.planes[2].bytes,

);

stopwatchYuv420sp2Uint8List.stop();

final stopwatchYuv420sp2rgb = Stopwatch()..start();

final pixels = ncnn.yuv420sp2rgb(

yuv420sp: yuv420sp,

width: image.width,

height: image.height,

);

stopwatchYuv420sp2rgb.stop();

final deviceOrientationType =

controller.value.deviceOrientation.kannaRotateType;

final sensorOrientation = controller.description.sensorOrientation;

final stopwatchKannaRotate = Stopwatch()..start();

final rotated = ncnn.kannaRotate(

pixels: pixels,

pixelChannel: PixelChannel.c3,

width: image.width,

height: image.height,

deviceOrientationType: deviceOrientationType,

sensorOrientation: sensorOrientation,

);

stopwatchKannaRotate.stop();

final stopwatchRgb2rgba = Stopwatch()..start();

final rgba = ncnn.rgb2rgba(

rgb: rotated.pixels!,

width: rotated.width,

height: rotated.height,

);

stopwatchRgb2rgba.stop();

final stopwatchDetect = Stopwatch()..start();

final results = ncnn.detectPixels(

pixels: rotated.pixels!,

pixelFormat: PixelFormat.rgb,

width: rotated.width,

height: rotated.height,

);

stopwatchDetect.stop();

final stopwatchDecodeImageFromPixels = Stopwatch()..start();

ui.decodeImageFromPixels(

rgba,

rotated.width,

rotated.height,

ui.PixelFormat.rgba8888,

(resultsImg) async {

stopwatchDecodeImageFromPixels.stop();

onDetected(results, resultsImg);

processing = false;

final sumMs = stopwatchYuv420sp2Uint8List.elapsedMilliseconds +

stopwatchYuv420sp2rgb.elapsedMilliseconds +

stopwatchKannaRotate.elapsedMilliseconds +

stopwatchRgb2rgba.elapsedMilliseconds +

stopwatchDetect.elapsedMilliseconds +

stopwatchDecodeImageFromPixels.elapsedMilliseconds;

print(

'''====

yuv420sp2Uint8List: ${stopwatchYuv420sp2Uint8List.elapsedMilliseconds}ms

yuv420sp2rgb: ${stopwatchYuv420sp2rgb.elapsedMilliseconds}ms

kannaRotate: ${stopwatchKannaRotate.elapsedMilliseconds}ms

rgb2rgba: ${stopwatchRgb2rgba.elapsedMilliseconds}ms

detect: ${stopwatchDetect.elapsedMilliseconds}ms

decodeImageFromPixels: ${stopwatchDecodeImageFromPixels.elapsedMilliseconds}ms

FPS: ${1000 / sumMs}

====

''',

);

},

);

}

void _bgra(CameraImage image) {

processing = true;

final deviceOrientationType =

controller.value.deviceOrientation.kannaRotateType;

final sensorOrientation = controller.description.sensorOrientation;

final stopwatchKannaRotate = Stopwatch()..start();

final rotated = ncnn.kannaRotate(

pixels: image.planes[0].bytes,

pixelChannel: PixelChannel.c4,

width: image.width,

height: image.height,

deviceOrientationType: deviceOrientationType,

sensorOrientation: sensorOrientation,

);

stopwatchKannaRotate.stop();

final stopwatchDetect = Stopwatch()..start();

final results = ncnn.detectPixels(

pixels: rotated.pixels!,

pixelFormat: PixelFormat.bgra,

width: rotated.width,

height: rotated.height,

);

stopwatchDetect.stop();

final stopwatchDecodeImageFromPixels = Stopwatch()..start();

ui.decodeImageFromPixels(

rotated.pixels!,

rotated.width,

rotated.height,

ui.PixelFormat.bgra8888,

(resultsImg) async {

stopwatchDecodeImageFromPixels.stop();

onDetected(results, resultsImg);

processing = false;

final sumMs = stopwatchKannaRotate.elapsedMilliseconds +

stopwatchDetect.elapsedMilliseconds +

stopwatchDecodeImageFromPixels.elapsedMilliseconds;

print(

'''====

kannaRotate: ${stopwatchKannaRotate.elapsedMilliseconds}ms

detect: ${stopwatchDetect.elapsedMilliseconds}ms

decodeImageFromPixels: ${stopwatchDecodeImageFromPixels.elapsedMilliseconds}ms

FPS: ${1000 / sumMs}

====

''',

);

},

);

}

@override

Widget build(BuildContext context) {

return Scaffold(

appBar: AppBar(

title: Text('Test'),

),

floatingActionButton: cameraOpen

? FloatingActionButton(

onPressed: () {

setState(() {

cameraOpen = !cameraOpen;

_stopImageStream();

});

},

)

: FloatingActionButton(

onPressed: () {

setState(() {

cameraOpen = !cameraOpen;

_startImageStream();

});

},

),

body: cameraOpen

? YoloxCameraLayoutWidget(

previewImage: previewImage,

results: yoloxResults,

labels: cocoLabels,

)

: Center(

child: Text('Body'),

),

);

}

}

extension DeviceOrientationExtension on DeviceOrientation {

KannaRotateDeviceOrientationType get kannaRotateType {

switch (this) {

case DeviceOrientation.portraitUp:

return KannaRotateDeviceOrientationType.portraitUp;

case DeviceOrientation.portraitDown:

return KannaRotateDeviceOrientationType.portraitDown;

case DeviceOrientation.landscapeLeft:

return KannaRotateDeviceOrientationType.landscapeLeft;

case DeviceOrientation.landscapeRight:

return KannaRotateDeviceOrientationType.landscapeRight;

}

}

}

final cocoLabels = [

'person',

'bicycle',

'car',

'motorcycle',

'airplane',

'bus',

'train',

'truck',

'boat',

'traffic light',

'fire hydrant',

'stop sign',

'parking meter',

'bench',

'bird',

'cat',

'dog',

'horse',

'sheep',

'cow',

'elephant',

'bear',

'zebra',

'giraffe',

'backpack',

'umbrella',

'handbag',

'tie',

'suitcase',

'frisbee',

'skis',

'snowboard',

'sports ball',

'kite',

'baseball bat',

'baseball glove',

'skateboard',

'surfboard',

'tennis racket',

'bottle',

'wine glass',

'cup',

'fork',

'knife',

'spoon',

'bowl',

'banana',

'apple',

'sandwich',

'orange',

'broccoli',

'carrot',

'hot dog',

'pizza',

'donut',

'cake',

'chair',

'couch',

'potted plant',

'bed',

'dining table',

'toilet',

'tv',

'laptop',

'mouse',

'remote',

'keyboard',

'cell phone',

'microwave',

'oven',

'toaster',

'sink',

'refrigerator',

'book',

'clock',

'vase',

'scissors',

'teddy bear',

'hair drier',

'toothbrush'

];
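
A note on the timings above: the per-frame prints use `elapsedMilliseconds`, which truncates any stage under 1 ms to 0, and a single frame can be noisy. To see which stage is actually slower on the Pixel than on the iPhone, averaging each stage over many frames may be more telling. The following is only a minimal sketch in plain Dart; `StageTimings` is a hypothetical helper and not part of ncnn_yolox_flutter.

```dart
/// Hypothetical helper (not part of ncnn_yolox_flutter): accumulates
/// per-stage durations so averages can be compared across devices.
class StageTimings {
  final Map<String, int> _totalMicros = {};
  final Map<String, int> _counts = {};

  /// Records one measurement for [stage].
  void add(String stage, Duration elapsed) {
    _totalMicros[stage] = (_totalMicros[stage] ?? 0) + elapsed.inMicroseconds;
    _counts[stage] = (_counts[stage] ?? 0) + 1;
  }

  /// Mean time for [stage] in milliseconds, or 0 if nothing was recorded.
  double averageMs(String stage) {
    final count = _counts[stage] ?? 0;
    if (count == 0) return 0;
    return _totalMicros[stage]! / count / 1000;
  }

  /// Stage with the largest average, or null if nothing was recorded.
  String? slowestStage() {
    String? slowest;
    for (final stage in _totalMicros.keys) {
      if (slowest == null || averageMs(stage) > averageMs(slowest)) {
        slowest = stage;
      }
    }
    return slowest;
  }
}
```

In `_yuv420`, each stopwatch could feed it with something like `timings.add('detect', stopwatchDetect.elapsed)`, and the averages could be printed every few hundred frames on both devices.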

The YoloxCameraLayoutWidget (widgets/yolox_camera_layout_widget.dart):

import 'dart:ui' as ui;

import 'package:flutter/material.dart';

import 'package:ncnn_yolox_flutter/ncnn_yolox_flutter.dart';

// The WidgetsBindingObserver mixin was never registered or used here.

class YoloxCameraLayoutWidget extends StatelessWidget {

YoloxCameraLayoutWidget({

Key? key,

this.previewImage,

required this.results,

required this.labels,

}) : super(key: key);

final ui.Image? previewImage;

final List<YoloxResults> results;

YoloxResults? produceResults;

final List<String> labels;

@override

Widget build(BuildContext context) {

return LayoutBuilder(

builder: (context, constraints) {

if (previewImage == null) {

return const Center(

child: CircularProgressIndicator(),

);

}

final cameraWidget = FittedBox(

child: SizedBox(

//height: _screenHeight,

//width: _screenWidth,

width: previewImage!.width.toDouble(),

height: previewImage!.height.toDouble(),

child: CustomPaint(

painter: YoloxResultsPainter(

image: previewImage!,

results: results,

labels: labels,

),

),

),

);

return cameraWidget;

},

);

}

}
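
One more caveat about the numbers: the FPS printed in the stream code is `1000 / sumMs`, that is, derived from the summed stage times only. It ignores the time between frames (camera delivery, dropped frames while `processing` is true, UI work), so the rate actually seen on screen may be lower. A wall-clock counter is one way to cross-check it. This is a rough sketch in plain Dart; `FpsCounter` is a hypothetical name, and the one-second window is an arbitrary assumption.

```dart
import 'dart:collection';

/// Hypothetical helper: estimates delivered frames per second from the
/// timestamps of completed frames inside a sliding time window.
class FpsCounter {
  FpsCounter({this.window = const Duration(seconds: 1)});

  final Duration window;
  final Queue<Duration> _stamps = Queue<Duration>();

  /// Call once per finished frame with a monotonic timestamp,
  /// for example the `elapsed` value of one long-running Stopwatch.
  void tick(Duration now) {
    _stamps.addLast(now);
    // Drop timestamps that have fallen out of the window.
    while (_stamps.isNotEmpty && now - _stamps.first > window) {
      _stamps.removeFirst();
    }
  }

  /// Frames per second over the retained timestamps, or 0 with fewer than two.
  double get fps {
    if (_stamps.length < 2) return 0;
    final span = _stamps.last - _stamps.first;
    if (span == Duration.zero) return 0;
    return (_stamps.length - 1) * 1e6 / span.inMicroseconds;
  }
}
```

Calling `tick` from the `decodeImageFromPixels` callback, right after `onDetected`, would count only frames that made it to the screen.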

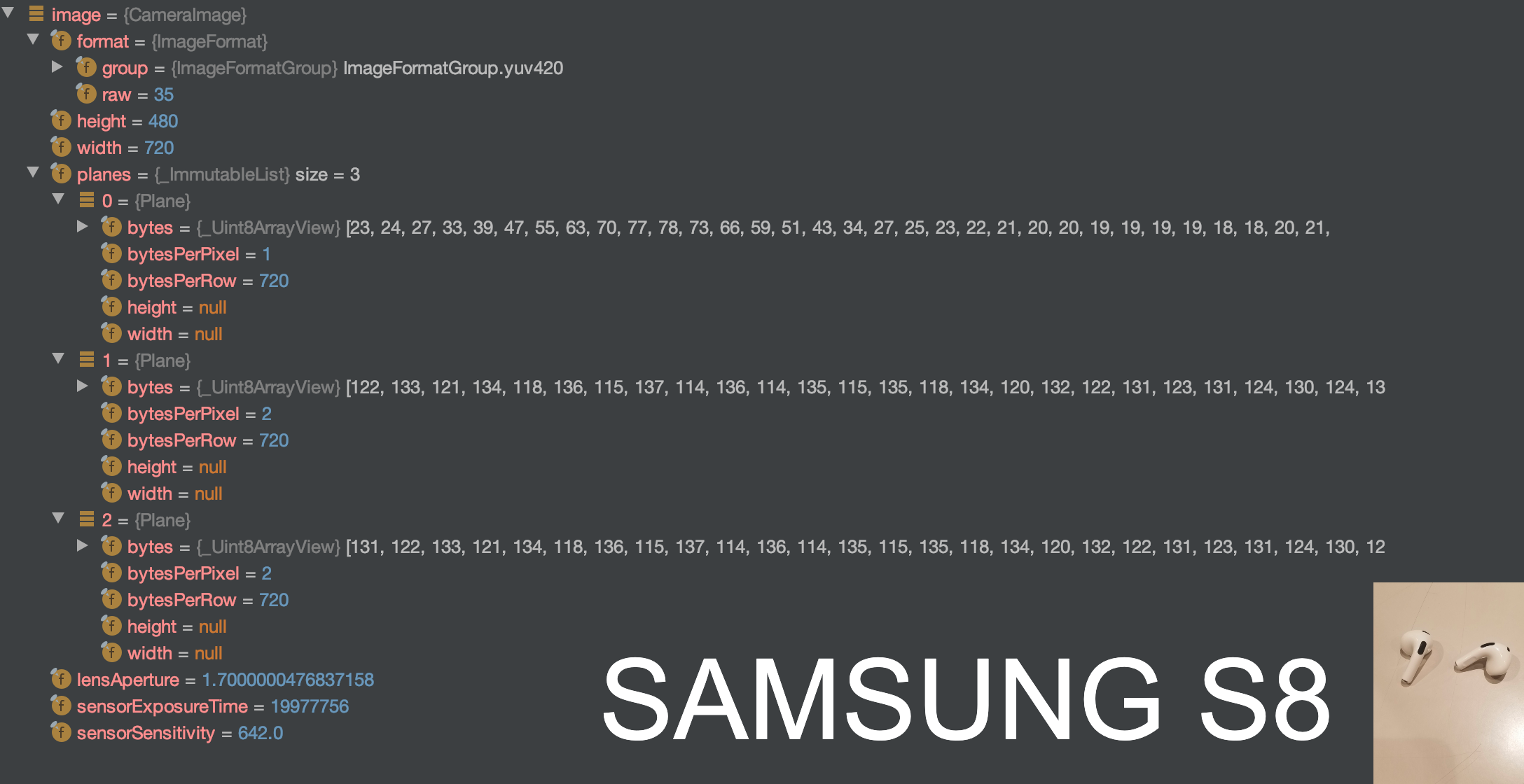

Samsung S8

How I convert YOLOx Model
